How API Design Impacts Real Estate Data Performance

APIs are the backbone of real estate platforms. Poor design leads to slow data, integration failures, and scalability issues. On the other hand, well-designed APIs ensure fast updates, smooth workflows, and efficient handling of large datasets.

Key takeaways:

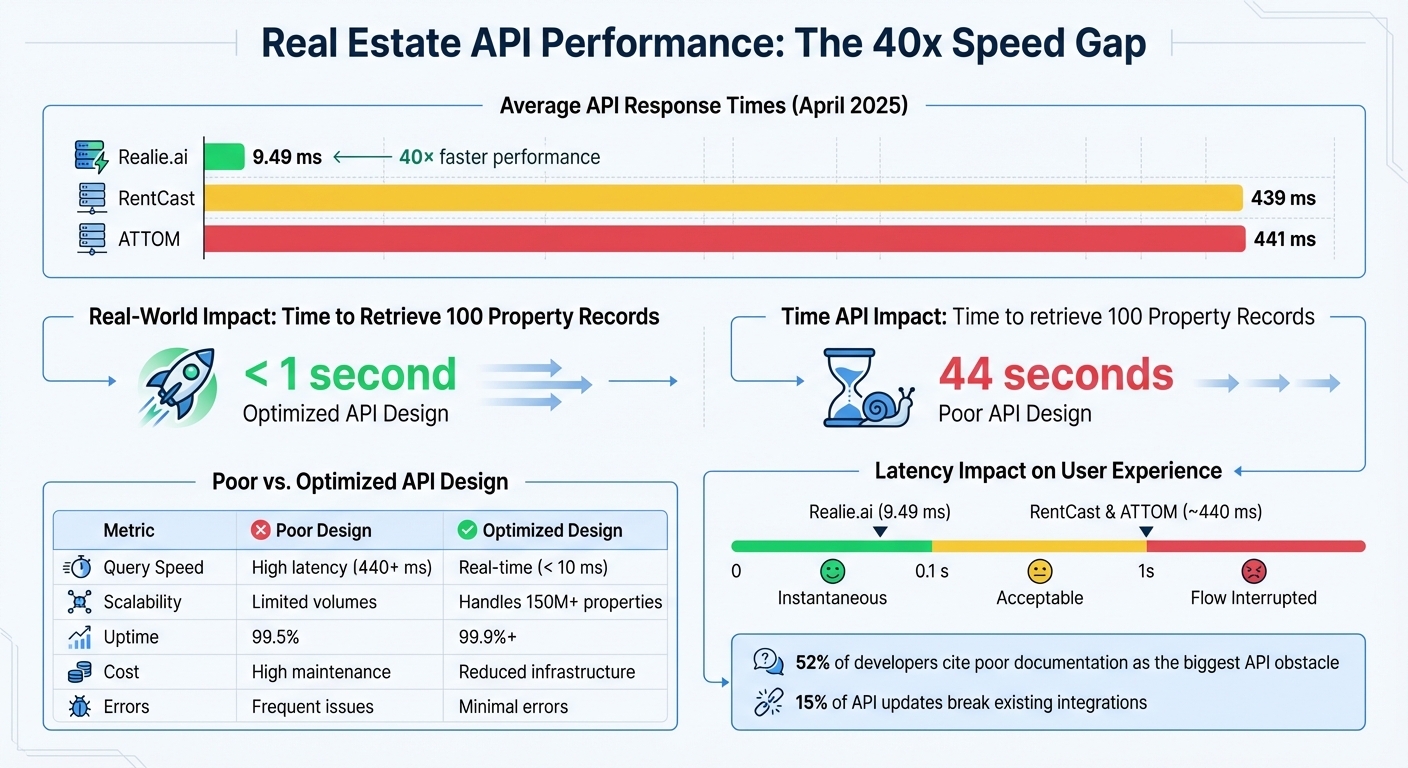

- Speed matters: Some APIs respond in 9.49 ms on average, while others take about 440 ms - a gap of more than 40x.

- Scalability challenges: Handling thousands of property records requires optimized architecture.

- Integration hurdles: Inconsistent data structures and poor error handling complicate third-party connections.

- Solutions: Use structured data, real-time updates, webhooks, and sandbox testing to improve performance.

A strong API design transforms end-to-end real estate platforms and point solutions into efficient, reliable systems that meet modern data demands.

Performance Problems in Real Estate APIs

API Performance Comparison: Response Times and Design Impact on Real Estate Platforms

When APIs are poorly designed, the flaws become clear quickly - especially in real estate, where platforms juggle property listings, market data, financial metrics, and third-party integrations all at once. The key challenges typically fall into three categories: slow data retrieval, scalability bottlenecks, and integration failures.

Slow Data Retrieval and Latency

Latency in APIs - the time between a data request and its delivery - can disrupt workflows in real estate, where immediate access to property details, market trends, and comparable sales is critical. Research shows that users perceive interactions under 0.1 seconds as instantaneous, while delays over 1 second interrupt their flow [5].

The underlying causes often stem from technical shortcuts. Poorly designed APIs may expose raw database structures (e.g., using cryptic column names like usr_id), which slows down processing and can even crash servers or break client-side parsing [4]. Without optimizations such as parallel processing, batching, or caching, what should be quick operations can stretch into multi-second delays.
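One way to avoid exposing raw database structures is a small translation layer between storage and the response. The sketch below is only an illustration: apart from `usr_id`, the column names, the public field names, and the `to_public` helper are all hypothetical.

```python
from typing import Any

# Hypothetical mapping from internal column names to public API field names.
# Only columns listed here are exposed; everything else stays internal.
PUBLIC_FIELDS = {
    "usr_id": "userId",
    "prop_addr_ln1": "addressLine1",
    "sq_ft_lvg": "livingAreaSqFt",
}


def to_public(row: dict[str, Any]) -> dict[str, Any]:
    """Translate a raw database row into the public response shape."""
    return {public: row[raw] for raw, public in PUBLIC_FIELDS.items() if raw in row}


# Made-up row, including an internal bookkeeping column that must not leak.
row = {"usr_id": 42, "prop_addr_ln1": "12 Main St", "sq_ft_lvg": 1850, "_etl_batch": 7}
print(to_public(row))
# {'userId': 42, 'addressLine1': '12 Main St', 'livingAreaSqFt': 1850}
```

Because clients only ever see the public names, the underlying schema can change without breaking their parsers.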

The performance disparity between API providers is striking. Some real estate APIs average just 9.49 milliseconds per request, while others take over 440 milliseconds - a difference of more than 40x [5]. For instance, fetching 100 records sequentially might take under 1 second with a fast API but up to 44 seconds with a slower one [5]. As one lead architect bluntly put it:

"This API was designed in a week, and we've spent three years paying for it" [4].
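The sequential-fetch figures cited above follow from simple multiplication. The sketch below reproduces them, assuming each request takes exactly the cited average latency and ignoring client-side overhead.

```python
FAST_MS = 9.49   # average latency cited for the fastest API
SLOW_MS = 440.0  # average latency cited for the slower APIs
RECORDS = 100


def sequential_seconds(latency_ms: float, n: int) -> float:
    """Total time for n back-to-back requests, ignoring client overhead."""
    return latency_ms * n / 1000


fast_total = sequential_seconds(FAST_MS, RECORDS)
slow_total = sequential_seconds(SLOW_MS, RECORDS)
ratio = SLOW_MS / FAST_MS

print(f"fast: {fast_total:.2f} s")  # fast: 0.95 s
print(f"slow: {slow_total:.2f} s")  # slow: 44.00 s
print(f"ratio: {ratio:.1f}x")       # ratio: 46.4x
```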

Scalability Issues with High-Volume Data

As real estate platforms expand, they face growing demands to handle large datasets - thousands of property listings, transaction records, and market analytics. APIs that work fine for small-scale operations often crumble when faced with the volume of data seen in real-world scenarios.

Poorly designed APIs not only slow down data retrieval but also lead to fragmented workflows. Data often ends up scattered across tools like Excel spreadsheets, CRMs, and property management software. This fragmentation forces teams into manual data entry and reconciliation, creating bottlenecks and increasing the risk of errors. Without centralized systems that can automatically ingest and harmonize data, scaling operations becomes a nightmare. The industry is shifting toward "single source of truth" platforms to eliminate these inefficiencies and better manage the growing data demands of modern real estate.

Integration Gaps with Third-Party Tools

Real estate platforms rarely operate in isolation - they need to connect seamlessly with property management systems, analytics tools, financial software, and MLS feeds. However, integration gaps caused by poorly designed APIs create data silos and limit functionality. As data volume increases, these integration challenges only grow.

One major issue is inconsistent and fragmented data structures. Historically, real estate data has been spread across sources like IDX, VOW, and BBO. APIs that mirror internal database structures or use inconsistent naming conventions (e.g., userId in one endpoint versus user_id in another) frustrate developers and complicate integrations [1] [4]. On top of this, poor error handling - such as APIs returning "200 OK" for actual errors or vague responses like {"error": true} - forces developers to build custom error detection logic instead of relying on standard HTTP status codes [4].
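As an illustration of the error-handling point, the sketch below pairs each failure with a matching HTTP status code and a descriptive body instead of a blanket "200 OK". The `get_listing` handler, the error codes, and the response shape are hypothetical and not taken from any particular real estate API.

```python
from http import HTTPStatus


def error_response(status: HTTPStatus, code: str, detail: str) -> tuple[int, dict]:
    """Build an error body whose HTTP status matches what actually went wrong."""
    return status.value, {"error": {"code": code, "message": detail}}


def get_listing(listing_id: str, listings: dict[str, dict]) -> tuple[int, dict]:
    """Hypothetical handler: look up one listing by its numeric ID."""
    if not listing_id.isdigit():
        return error_response(HTTPStatus.BAD_REQUEST, "invalid_listing_id",
                              "listing_id must be numeric")
    if listing_id not in listings:
        return error_response(HTTPStatus.NOT_FOUND, "listing_not_found",
                              f"no listing with id {listing_id}")
    return HTTPStatus.OK.value, listings[listing_id]


listings = {"101": {"listingId": "101", "city": "Austin"}}  # made-up data
print(get_listing("101", listings))  # (200, {'listingId': '101', 'city': 'Austin'})
print(get_listing("999", listings)[0])  # 404
print(get_listing("abc", listings)[0])  # 400
```

A client can then branch on the status code alone and read the body only when it needs the details.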

High latency further complicates integrations. For example, when an API averages 440 milliseconds per request, developers must implement workarounds like parallelism, batching, or caching to maintain acceptable performance - adding unnecessary complexity and technical debt [5]. Additionally, 52% of developers cite poor documentation as the biggest obstacle when working with APIs, while about 15% of API updates break compatibility with existing integrations [4]. As Joseph Szurgyi, CEO of MLS Grid, emphasized:

"RESO absolutely has to be that voice on behalf of those who are in this realm – this part of the industry. We need to have a voice, and RESO should be that voice" [1].

API Design Principles for Real Estate Platforms

To tackle the performance challenges discussed earlier, API design for real estate platforms demands a fresh approach. The rapid growth of the real estate tech sector calls for APIs that can handle immense data loads while delivering fast, reliable results. These principles aim to address the issues of latency, scalability, and integration head-on.

Structured Data Storage and Optimized Queries

Modern real estate APIs rely on structured data organization, breaking down information into categories like Property Characteristics (the physical details), Financial and Transactional History (including valuations, liens, and equity), and Ownership Details (contact information). This setup transforms fragmented MLS and public record data into clear, standardized formats that are instantly accessible.

Well-designed APIs feature targeted endpoints. Instead of forcing users to pull entire datasets, they allow for specific queries, such as a "Property Search" endpoint for filtering millions of records or a "Property Details" endpoint for detailed profiles of individual properties. Many APIs now provide structured access to data on more than 150 million residential and commercial properties [3], ensuring users only receive the precise information they need.
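A minimal sketch of how these categories and targeted endpoints might fit together is shown below. The class names, fields, and the `search_properties` and `property_details` functions are assumptions for illustration, and the inventory is made-up data.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Characteristics:
    bedrooms: int
    living_area_sqft: int


@dataclass(frozen=True)
class Financials:
    last_sale_price: int
    open_liens: int


@dataclass(frozen=True)
class Ownership:
    owner_name: str


@dataclass(frozen=True)
class Property:
    property_id: str
    city: str
    characteristics: Characteristics
    financials: Financials
    ownership: Ownership


def search_properties(properties: Iterable[Property], city: str,
                      min_bedrooms: int = 0,
                      max_price: Optional[int] = None) -> list[str]:
    """'Property Search': return only matching IDs, not whole records."""
    matches = []
    for p in properties:
        if p.city != city or p.characteristics.bedrooms < min_bedrooms:
            continue
        if max_price is not None and p.financials.last_sale_price > max_price:
            continue
        matches.append(p.property_id)
    return matches


def property_details(properties: Iterable[Property], property_id: str) -> Optional[Property]:
    """'Property Details': return the full profile for one property."""
    return next((p for p in properties if p.property_id == property_id), None)


INVENTORY = [
    Property("p-1", "Austin", Characteristics(3, 1850), Financials(410_000, 0), Ownership("A. Owner")),
    Property("p-2", "Austin", Characteristics(2, 1100), Financials(295_000, 1), Ownership("B. Owner")),
    Property("p-3", "Denver", Characteristics(4, 2400), Financials(620_000, 0), Ownership("C. Owner")),
]

print(search_properties(INVENTORY, "Austin", min_bedrooms=3))  # ['p-1']
print(property_details(INVENTORY, "p-2").financials.open_liens)  # 1
```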

This structured storage also enables automatic data enrichment. For example, entering a single address can instantly retrieve verified owner contact details through skip tracing. As Ivo Draginov puts it:

"A real estate data API isn't just a technical tool; it's a strategic asset that transforms raw property information into actionable business intelligence, giving companies the ability to move faster and smarter than their competitors" [3].

Real-Time Updates for Property Data

To address latency problems, top-tier API providers often deliver daily updates, widely regarded as the standard for actionable property data [3]. Many also use webhooks to push updates in real time, cutting down on unnecessary API calls and conserving resources.

Real-time integration can drastically reduce operational delays. For instance, lenders can automate the retrieval of lien data and sales history, shortening underwriting timelines from days to just hours. Leading providers often promise 99.9% uptime, ensuring users always have access to the latest data when making critical decisions.

Integration with Third-Party Systems

Overcoming integration challenges requires APIs to support seamless connections with third-party tools. This involves secure credential management, event-driven architecture, and continuous monitoring.

One example is a commercial real estate platform that implemented the BatchData API in late 2024. By automating its entity resolution process, the platform reduced the time needed for complex entity matching from 30 minutes per record to just seconds, significantly improving efficiency [3].

Platforms like CoreCast, which consolidate underwriting, pipeline tracking, portfolio analysis, and stakeholder reporting, rely heavily on API integrations. Features like sandbox environments allow for thorough testing before deployment, ensuring smooth connections to property management systems and other tools. These integrations eliminate workflow silos, creating a unified hub for property intelligence.

How to Improve Real Estate API Performance

Optimizing API Architecture for Scalability

Switching from traditional polling methods to webhooks is a game-changer for real estate platforms. Unlike polling, where the client repeatedly checks for updates whether or not anything has changed, webhooks send notifications only when changes occur. This drastically reduces unnecessary API calls and lightens the server load [3]. Considering that over 56% of the real estate IT market relies on cloud-based infrastructure, this efficiency is crucial [3].
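To make the difference concrete, the sketch below counts requests under each model and shows a tiny handler that applies a pushed event to a local cache. The event types (`listing.updated`, `listing.removed`) and payload fields are hypothetical, not any provider's actual webhook format.

```python
def polling_calls(window_minutes: int, interval_minutes: int) -> int:
    """Requests a poller sends in the window, whether or not anything changed."""
    return window_minutes // interval_minutes


def webhook_calls(change_events: int) -> int:
    """Notifications a webhook sender delivers: one per actual change."""
    return change_events


def handle_listing_event(event: dict, cache: dict) -> None:
    """Apply one pushed event (hypothetical format) to a local listing cache."""
    if event["type"] == "listing.updated":
        cache[event["listing_id"]] = event["data"]
    elif event["type"] == "listing.removed":
        cache.pop(event["listing_id"], None)


DAY_MINUTES = 24 * 60
print(polling_calls(DAY_MINUTES, 5))  # 288 requests when polling every 5 minutes
print(webhook_calls(3))               # 3 notifications for 3 changes that day

cache: dict = {}
handle_listing_event(
    {"type": "listing.updated", "listing_id": "101", "data": {"price": 410_000}}, cache)
print(cache)  # {'101': {'price': 410000}}
```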

A layered architecture further enhances scalability by organizing property data into specific categories like Characteristics, Financial, and Ownership. This structure allows for targeted queries, avoiding the need to retrieve entire datasets. It’s a smart way to manage data for the more than 150 million residential and commercial properties in the U.S. [3].

For platforms handling high traffic, exponential backoff logic is essential. When rate limits are hit, the client retries the request after waits that grow exponentially (for example 1, 2, then 4 seconds), preventing server overload during peak usage. Testing in a sandbox environment helps identify weak points without affecting live operations. Together, these strategies build a more resilient and efficient API architecture. This foundation is essential for developing integrated platforms that unify fragmented property data.
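A minimal sketch of exponential backoff follows. It assumes the caller supplies a `request` function that raises a hypothetical `RateLimited` exception on a rate-limit response. The base delay, the cap, and the small random jitter are illustrative choices rather than values from any provider's documentation.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical error raised by the request function on a rate-limit response."""


def backoff_delays(retries: int, base: float = 0.5, cap: float = 30.0) -> list[float]:
    """Delays that double on each attempt, never exceeding the cap."""
    return [min(cap, base * 2 ** attempt) for attempt in range(retries)]


def call_with_backoff(request: Callable[[], T], retries: int = 5,
                      base: float = 0.5, cap: float = 30.0,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Try the request, waiting longer after each rate-limit failure."""
    for delay in backoff_delays(retries, base, cap):
        try:
            return request()
        except RateLimited:
            # A little random jitter keeps many clients from retrying in lockstep.
            sleep(delay + random.uniform(0, delay / 10))
    return request()  # final attempt; any error now propagates to the caller


print(backoff_delays(5))  # [0.5, 1.0, 2.0, 4.0, 8.0]
```

Passing `sleep` as a parameter makes the retry loop easy to exercise in a sandbox or test without real waiting.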

Implementing Rate Limiting for Resource Management

Rate limiting acts as a safeguard, controlling the number of requests a client can make within a set period. This ensures no single user overwhelms the system, maintaining consistent performance even during high-traffic times [3]. Without it, servers risk being overloaded, leading to service disruptions or temporary access blocks.
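One common way to implement such a limit is a token bucket. This is named here as an illustrative technique, not as the method any cited provider uses. In the sketch below, the `TokenBucket` class and its parameters are assumptions: each client gets a bucket that refills at a steady rate, and a request is allowed only if a token is available.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow short bursts up to `capacity`, then a steady `refill_per_second` rate."""

    def __init__(self, capacity: int, refill_per_second: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        """Return True and spend a token if the request may proceed."""
        now = self.clock()
        elapsed = now - self.updated
        # Top up for the time that has passed, without exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # the caller would typically answer with HTTP 429


# Demo with a fake clock so the result does not depend on real time.
fake_now = [0.0]
bucket = TokenBucket(capacity=2, refill_per_second=1.0, clock=lambda: fake_now[0])
print([bucket.allow() for _ in range(3)])  # [True, True, False]
fake_now[0] = 1.0                          # one second later, one token is back
print(bucket.allow())                      # True
```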

A critical aspect of rate limiting is proactive monitoring. By tracking API usage through real-time dashboards, developers can pinpoint inefficient queries and predict infrastructure costs before they spiral out of control. When applications encounter rate limit errors (HTTP 429), implementing retry logic ensures smooth handling of these situations [3]. As Livify aptly puts it:

"Performance is a feature, and for APIs, it's the most critical one. It's not just about raw speed; it's about consistent, predictable, and scalable responsiveness under load." [6]

Comparison Table: Poor vs. Optimized API Design

| Metric | Poor API Design | Optimized API Design |
|---|---|---|
| Query Speed (Latency) | High latency | Real-time or near-instant |
| Scalability | Limited to small volumes | Handles large datasets |
| Cost Efficiency | High maintenance costs | Reduced infrastructure costs |
| Error Reduction | Frequent issues | Minimal to no errors |

Measuring the Impact of API Design on Data Performance

API architecture isn't just about efficiency - it’s about measurable results. By tracking key metrics, businesses can see how well their APIs are performing and how those improvements translate into better outcomes. Metrics like average latency, which reflects typical performance, and P95 latency, the response time that 95% of requests meet or beat (so it exposes how slow the worst 5% are), provide a clear picture of API effectiveness. For real estate platforms that handle portfolio analysis, these metrics can mean the difference between smooth operations and frustrating delays.
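The two latency metrics can diverge sharply, which is why both are worth tracking. The sketch below computes them from made-up samples, using the nearest-rank definition of a percentile (one of several common definitions).

```python
import statistics


def p95(samples: list[float]) -> float:
    """Nearest-rank 95th percentile: smallest sample with at least 95% of values at or below it."""
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


# Made-up samples: 90 fast responses and 10 slow ones, in milliseconds.
latencies_ms = [10.0] * 90 + [400.0] * 10

print(statistics.mean(latencies_ms))  # 49.0
print(p95(latencies_ms))              # 400.0
```

Here the average looks tolerable at 49 ms, while P95 shows that a meaningful share of requests take 400 ms.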

Throughput is another critical measure: it shows how efficiently an API handles bulk data requests. In April 2025, Realie.ai co-founder and CTO Alex Franzen led a benchmark study comparing Realie’s optimized API with industry leaders. Realie delivered an average response time of 9.49 milliseconds, significantly outperforming RentCast and ATTOM, which averaged 439 ms and 441 ms, respectively. This speed advantage of more than 40× means retrieving 100 records sequentially takes less than 1 second with Realie, compared to about 44 seconds with the slower APIs [5]. Such low latency translates to a smoother, faster user experience.

APIs also play a vital role in automating tasks like risk assessments. For example, they can flag liens, mortgages, or pre-foreclosure filings, cutting underwriting timelines from days to hours [3]. Additionally, data standardization - such as using clear naming conventions to differentiate "total" square footage from "living" square footage - prevents costly misinterpretations that could derail financial models [7].

Another essential metric is uptime and availability. Reliable APIs ensure consistent data integration, with industry standards targeting at least 99.9% uptime [3] [7]. However, some providers, like ATTOM, report a 7-day average uptime of 99.5% [5]. For platforms like CoreCast, which handle everything from underwriting to portfolio analysis, even brief outages can disrupt critical workflows and decision-making.
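Uptime percentages are easier to compare once converted to allowed downtime. The short calculation below does that for the two figures mentioned above over a 7-day window. The helper name is an assumption.

```python
def allowed_downtime_minutes(uptime_percent: float, period_minutes: float) -> float:
    """Minutes of downtime implied by an uptime percentage over a period."""
    return period_minutes * (1 - uptime_percent / 100)


WEEK_MINUTES = 7 * 24 * 60  # 10,080 minutes

print(round(allowed_downtime_minutes(99.9, WEEK_MINUTES), 2))  # 10.08
print(round(allowed_downtime_minutes(99.5, WEEK_MINUTES), 2))  # 50.4
```

Over one week, 99.9% uptime allows about 10 minutes of downtime, while 99.5% allows about 50 minutes.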

The concept of a performance budget ties API speed directly to user experience. Data retrieval under 0.1 seconds feels instant, while delays over 1 second can disrupt user flow [5]. APIs with response times under 10 milliseconds leave plenty of room for complex calculations and analysis. On the other hand, APIs approaching 400 ms per call require additional strategies like parallelism, batching, or caching to maintain acceptable speeds, which adds complexity and cost [5].
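As a rough sketch of the parallelism workaround, the code below issues slow calls concurrently with a thread pool. `fetch_record` is a stand-in that sleeps instead of calling a real API, and the 50 ms latency and worker count are arbitrary illustrative values.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Iterable


def fetch_record(record_id: int, latency_s: float = 0.05) -> dict:
    """Stand-in for one slow API call; sleeps instead of using the network."""
    time.sleep(latency_s)
    return {"id": record_id}


def fetch_all(record_ids: Iterable[int], workers: int = 10) -> list[dict]:
    """Fetch records concurrently; results come back in input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(fetch_record, record_ids))


start = time.perf_counter()
records = fetch_all(range(20))
elapsed = time.perf_counter() - start

# Sequentially, 20 calls at 50 ms each would take about 1 second.
# With 10 workers the calls overlap, so the batch finishes far sooner.
print(len(records), f"{elapsed:.2f} s")
```

The extra moving parts (pool sizing, error handling per call, respecting rate limits) are the added complexity that a faster API avoids.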

Conclusion

API design lays the groundwork for how effectively a real estate platform can deliver fast, reliable data. A well-thought-out architecture shapes how platforms handle property data, integrate with third-party systems, and scale to meet growing user needs. As Turan Tekin, Senior Director of MLS and Industry Relations at Zillow Group, put it:

"Things are going really, really well in API land" [1].

By adopting microservices architecture and RESTful design principles, platforms can scale specific functions independently. For example, managing property searches during peak traffic or processing images for countless listings becomes more efficient without slowing down the entire system. A great illustration of this is Bridge Interactive's success in migrating over 50 data consumers to a single data feed by late 2022 [1] [2].

The advantages of optimized API strategies are evident in platforms like CoreCast. As a comprehensive real estate intelligence tool, CoreCast integrates modern API design to streamline complex processes. Users can underwrite various asset classes, track deals through different pipeline stages, analyze portfolios in-depth, and create branded reports - all within one seamless system. This integration not only simplifies data management but also ensures smooth workflows, from underwriting to stakeholder reporting.

The difference between a responsive API and a sluggish one is huge. Faster APIs enable advanced calculations, automated evaluations, and real-time updates - critical for real estate professionals managing large portfolios. CoreCast embodies the API-first approach, combining speed, scalability, and seamless integration to deliver exceptional performance.

Platforms that prioritize an API-first strategy, adhere to RESO standards, and continuously monitor performance metrics are better equipped to meet the demands of today’s data-driven real estate industry. These architectural decisions will shape which platforms succeed in the ever-evolving digital landscape.

FAQs

What makes a real estate API “fast” in practice?

A real estate API is deemed "fast" when its response time is under 100 milliseconds. This speed allows for real-time data access, ensuring a smooth and efficient user experience. On the other hand, delays longer than 3 seconds can frustrate users or even cause them to abandon the platform entirely. Keeping latency low is crucial for maintaining performance and user satisfaction.

Which API metrics matter most for real estate data performance?

When evaluating real estate data APIs, certain metrics play a crucial role in determining their effectiveness. These include:

- Response Times: How quickly the API delivers data is critical. Faster response times mean smoother user experiences and quicker access to information.

- Request Limits: APIs often have usage caps, so understanding these limits helps ensure your application can handle the required data volume without interruptions.

- Data Update Frequency: Regularly updated data ensures that listings, prices, and other details are accurate and current, which is vital for maintaining user trust and platform reliability.

By monitoring these metrics, real estate platforms can ensure they process and integrate data efficiently while maintaining a high level of performance.

When should a platform use webhooks instead of polling?

Webhooks are ideal for scenarios where real-time updates and instant notifications are necessary. They immediately push data whenever an event happens, which not only minimizes latency but also eases the burden on servers. This efficiency makes webhooks a better choice than polling for operations that depend on timely responses.